Finally: the new Lenovo Yoga Aura is fully set up under #NixOS.

An interesting side effect of the week of tinkering: fully focused, zero distraction from YouTube or TV. The “cat” now purrs exactly the way I want it to.

In the spirit of data sovereignty (#Datensouveränität), all backups run fully automated and GDPR-compliant into the German cloud of @mailbox_org.

Next goals:

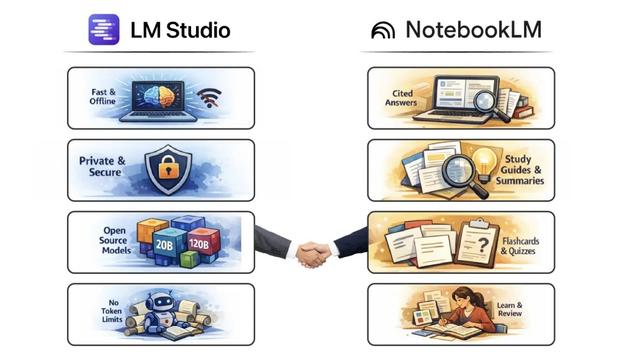

- Evaluate local LLMs in #LMStudio for practical usability, and with that cut the next thread of US dependency.
- For #GrapheneOS, wait for the hardware roadmap.

Step by step, out of the gilded cages.

#UnplugTrump #Unplugbigtech #did #diday